GPU Compute

NVIDIA H200 GPU

Cost Effective Resources & Infrastructure

Reduce your cloud costs without sacrificing performance or reliability. mCloud delivers enterprise grade cloud infrastructure at a fraction of the cost of AWS, Google Cloud, or Azure.

Fault-Tolerant Tier IV Data Centre

Micron21 operates Australia’s first Tier IV-certified data centre, offering 100% uptime, redundant power, and high availability architecture.

24/7 Australian-based Expert Support

Our cloud specialists provide 24/7 Australian-based support, ensuring seamless deployments and efficient troubleshooting.

NVIDIA H200

Supercharging AI and HPC

Minimum Specifications

Provided as a High-Availability mCloud Virtual Cloud Server

- GPU: NVIDIA H200 (141 GB)

- GPU Compute: Dedicated

- vCPU: 12 Cores - XEON Gold

- RAM: 64 GB - DDR4

- Storage: 500 GB - NVMe SSD

- Bandwidth: 2 TB p/m

- IP Address: Included

- DDoS Protection: Shield

Introduction

Higher Performance

With

Larger & Faster Memory

Our mCloud platform utilises NVIDIA H200 GPUs - based on the NVIDIA Hopper architecture - which is the first GPU to offer 141 gigabytes (GB) of HBM3e memory at 4.8 terabytes per second (TB/s). That’s nearly double the capacity of the NVIDIA H100 GPU with 1.4X more memory bandwidth.

The H200’s larger and faster memory accelerates generative AI and LLMs, while advancing scientific computing for HPC workloads with better energy efficiency and lower total cost of ownership.

Why Micron21

Why Choose Micron21 for GPU Compute?

Being Australia's first Tier IV data centre, you can rest assured our GPU Compute offerings provide reliable, secure, high-calibre performance.

High-Speed Compute &

NVMe SSD Storage

Our GPU Cloud Servers utilise Intel XEON Gold CPUs and ultra-fast NVMe SSDs to deliver high-performance compute and storage. These ensure ultra fast processing speeds and rapid access to resources for even the most-demanding of applications.

DDoS Protection

All GPU Cloud Servers with Micron21 are protected via our comprehensive DDoS platform which employs multiple layers of protection to inspect, scan and filter traffic at our global scrubbing centres.

Tier IV Data Centre

Tier IV is the highest uptime accreditation that a data centre can have. Ensure the availability of your systems by combining bulletproof dedicated servers with Micron21. We're Australia's first Tier IV accredited data centre.

ISO Certified

We're up-to-date with the latest in security standards. This includes being ISO 27001, 27002, 27018 and 14520 certified; PCI compliant; and IRAP assessed.

Data Sovereignty

We're proudly 100% Australian owned and operated. In this cyber age with concerns over foreign influence, the physical sovereignty of your data is the ultimate peace of mind.

Australian Support

Our dedicated Australian-based support technicians are located in the Micron21 Data Centre. We aim to completely remove the complexity of IT management for our customers with 24/7 access available.

Cloud GPUaaS

Cost Effective and High Performance

GPU-as-a-Service

Our mCloud platform offers the ability to integrate powerful NVIDIA GPUs directly into your virtual machines through GPU passthrough technology, allowing virtual machines to access the full capabilities of a physical GPU and providing near-native performance

Contended GPU

Through time-sliced access to GPU compute with guaranteed minimums, clients with non-time critical workloads or limited budgets can now get affordable access to cloud-based GPU compute

Dedicated GPU

For those who require their own dedicated GPUs, our platform supports NVIDIA A100, NVIDIA RTX A6000, NVIDIA H100, and NVIDIA H200 GPUs, all designed to support any workload you need to run in the cloud

Features

Key Features of NVIDIA H200 GPUs

NVIDIA Hopper Architecture

Learn about the next massive leap in accelerated computing with the NVIDIA Hopper™ architecture. Hopper securely scales diverse workloads in every data center, from small enterprise to exascale high-performance computing (HPC) and trillion-parameter AI—so brilliant innovators can fulfill their life's work at the fastest pace in human history.

Reduce Energy and TCO

With the introduction of H200, energy efficiency and TCO reach new levels. This cutting-edge technology offers unparalleled performance, all within the same power profile as the H100 Tensor Core GPU. AI factories and supercomputing systems that are not only faster but also more eco-friendly deliver an economic edge that propels the AI and scientific communities forward.

Unleashing AI Acceleration for Mainstream Servers

The NVIDIA H200 NVL is the ideal choice for customers with space constraints within the data center, delivering acceleration for every AI and HPC workload regardless of size. With a 1.5X memory increase and a 1.2X bandwidth increase over the previous generation,customers can fine-tune LLMs within a few hours and experience LLM inference 1.8X faster.

Supercharge High-Performance Computing

Memory bandwidth is crucial for HPC applications, as it enables faster data transfer and reduces complex processing bottlenecks. For memory-intensive HPC applications like simulations, scientific research, and artificial intelligence, the H200’s higher memory bandwidth ensures that data can be accessed and manipulated efficiently, leading to 110X faster time to results.

Unlock Insights With

High-Performance LLM Inference

In the ever-evolving landscape of AI, businesses rely on large language models to address a diverse range of inference needs. An AI inference accelerator must deliver the highest throughput at the lowest TCO when deployed at scale for a massive user base. The H200 doubles inference performance compared to H100 GPUs when handling large language models such as Llama2 70B.

Conclusion

Experience GPU-Accelerated

Cloud Computing

Our mCloud platform, built on robust OpenStack architecture, now offers the ability to integrate powerful NVIDIA GPUs directly into your virtual machines through GPU passthrough technology. This allows virtual machines to access the full capabilities of a physical GPU as if it were directly attached to the system, bypassing the hypervisor’s emulation layer and providing near-native performance.

Enhance your cloud capabilities with GPU acceleration by integrating dedicated GPUs into your mCloud virtual machines and take your computing to the next level.

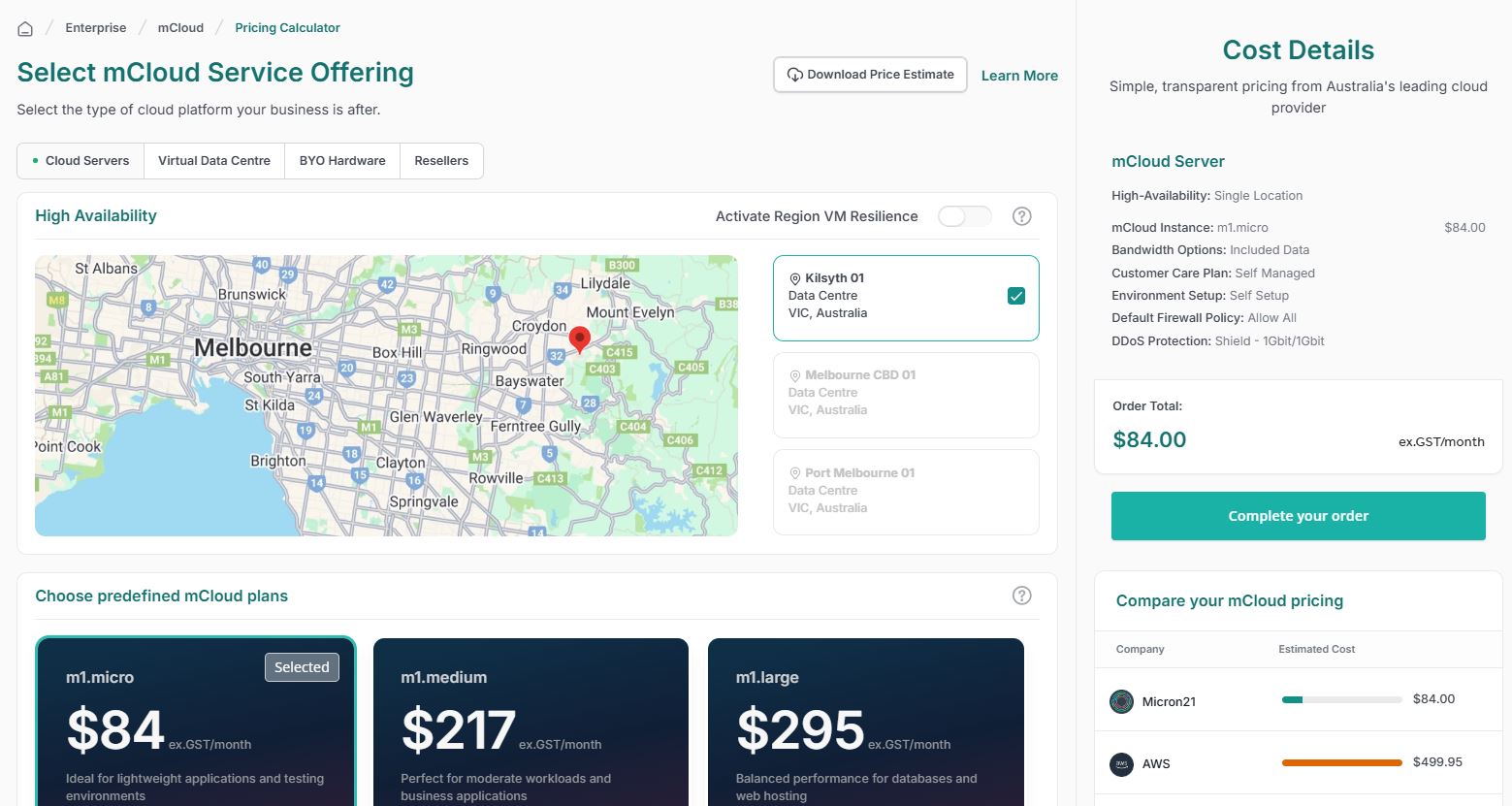

See How Much You Can Save with mCloud

Customize your cloud and compare costs instantly against AWS, Google Cloud, and Microsoft Azure. Get more for less with enterprise-grade performance.

- Transparent Pricing: No hidden fees or surprises.

- Enterprise-Grade for Less: High performance at lower costs.

- Instant Comparison: See real-time savings.