What is GPU Compute? The compute that’s empowering AI workloads around the world

30 Mar 2026, by Micron21

Nowadays, it seems like every other article from the major news publications is about AI. And you’ll have to forgive us, as this article too will be tangentially related to that topic. However, in our case, we’ll be coming more from the angle of explaining what “GPU compute” is. We'll talk about how it’s a technology that helps users with computationally taxing tasks such as graphics rendering, video editing, scientific simulations (and much more), but we'll delve in further, explaining why GPU compute is the main IT resource required for AI models.

Now, you might be forgiven for thinking that it is actually RAM that is the most important resource required for AI models - especially given the ongoing massive shortages caused by large AI companies purchasing all existing stock and contracting with manufacturers for even more, with RAM prices surging by as much as 55% in Q1 2026 compared to the previous quarter [1]. However, it’s actually GPU compute that is powering AI workloads all over the world. This is because GPU compute is not only required to run the models that people interact with on a day-to-day basis, but is also what is required to train those models in the first place.

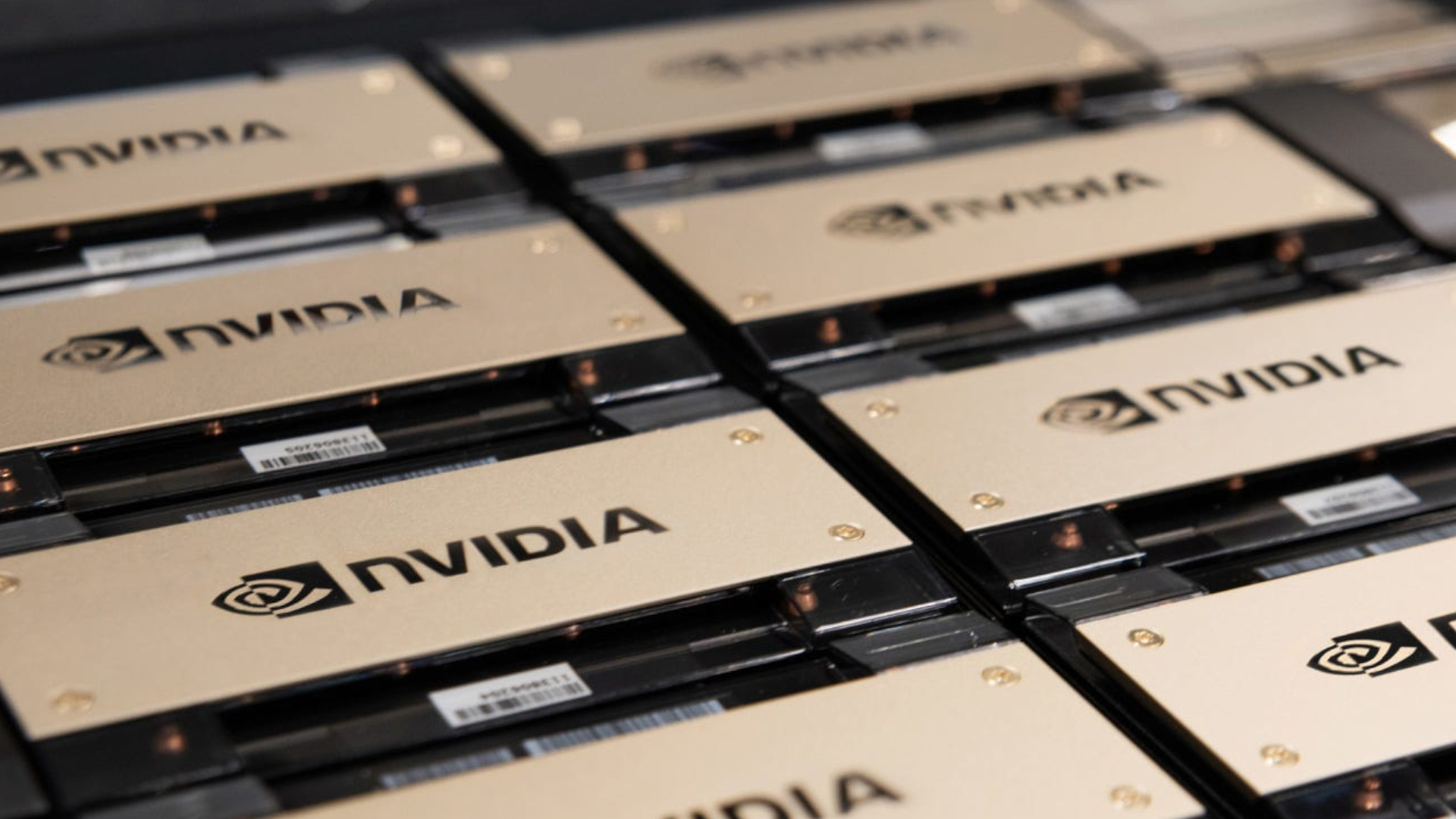

And there's a reason why NVIDIA – the world's leading manufacturer of GPUs – is now noted as being the largest company in the world by market capitalisation, having become the first publicly traded company to hit $4 trillion in market value [2] in July 2025, before surpassing $5 trillion just three months later in October [3]. This kind of meteoric growth doesn't just happen by accident – it's a direct reflection of just how critical GPU compute has become to the technology landscape.

That's why this month we'll be talking about GPU compute – what it is, how it differs from CPU compute, what its strengths are, and the different ways it's available.

What is GPU Compute?

Before we can talk about GPU compute, we first need to understand "compute" more generally – starting with the CPU, which has been the workhorse of computing for decades.

A CPU - short for Central Processing Unit - is the primary processor in any computing device. It's designed to handle a wide variety of tasks sequentially and is optimised for complex, logic-heavy operations. Whether it's running an operating system, executing application code, managing file systems, or handling network requests – the CPU is what makes all of that possible. CPUs are incredibly versatile, with their strength lying in their ability to handle diverse workloads that require complex decision-making and branching logic. Think of a CPU as a highly skilled generalist that can do almost anything, as long as it tackles problems one step at a time (or a small number of steps in parallel, with modern multi-core designs).

However, as computing advanced, certain workloads emerged that the CPU simply wasn't optimally designed for. Specifically, these were tasks that involved performing the same mathematical operation across massive amounts of data simultaneously, such as rendering the thousands of individual pixels on a screen. These tasks were bottlenecked by the CPU's sequential nature, which is what led to the invention of the GPU – short for Graphics Processing Unit.

The history of dedicated graphics hardware stretches back to the early 1980s, with early graphics display systems such as IBM's Monochrome Display Adapter (MDA) in 1981, and the Video Graphics Array (VGA) standard six years later, in 1987 [4]. However, the modern GPU era truly began in the mid-1990s with the introduction of the first 3D graphics accelerator cards. In 1996, 3dfx Interactive released their Voodoo1 graphics chip, which pioneered the shift towards dedicated 3D rendering hardware. NVIDIA, having been founded in 1993, gained significant prominence in 1997 with the RIVA 128, which combined 3D acceleration with traditional 2D and video capabilities. It was then in 1999 that NVIDIA popularised the term "GPU" with the release of the GeForce 256, defining it as "a single chip processor with integrated transform, lighting" capabilities.

From that point on, GPUs evolved rapidly. They became the backbone of the gaming industry, allowing for increasingly realistic graphics, complex 3D worlds, and smooth framerates that would have been utterly impossible with CPUs alone. Beyond gaming, GPUs also became indispensable tools for professionals in fields such as graphic design, 3D modelling, video editing, and scientific visualisation. All of these workloads share a common characteristic – they involve performing relatively simple mathematical calculations, but across enormous datasets simultaneously. This is known as "parallel processing" and it's the fundamental strength of GPU compute.
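To make the idea concrete, here is a minimal Python sketch of a data-parallel task: brightening every pixel of a tiny greyscale image. This is our own illustration rather than code from any GPU library, and the `brighten` function and pixel values are hypothetical. The point is that each pixel's result depends only on that pixel, so the work can be split into chunks and processed in any order, which is the property GPUs exploit.

```python
def brighten(pixel, amount=40):
    """Brighten one greyscale pixel (0-255), clamping at white."""
    return min(pixel + amount, 255)

# A hypothetical 2x4 greyscale image, flattened into one list of pixels.
image = [0, 30, 90, 120, 200, 230, 250, 255]

# Sequential version: one pixel after another, as a single CPU core would.
sequential = [brighten(p) for p in image]

# Data-parallel view: split the pixels into independent chunks. Because no
# pixel depends on any other, the chunks could run at the same time on
# separate cores. Here we process them in reverse order to show that the
# order of execution does not matter.
chunk_size = 2
chunks = [image[i:i + chunk_size] for i in range(0, len(image), chunk_size)]
processed = {}
for index in reversed(range(len(chunks))):
    processed[index] = [brighten(p) for p in chunks[index]]

# Stitch the chunks back together in their original positions.
chunked = [p for index in range(len(chunks)) for p in processed[index]]

print(sequential == chunked)  # True
```

On a real GPU, each of those chunks (or even each pixel) would be handed to its own core rather than looped over in Python.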

To put it in perspective, where a modern CPU might have between 8 and 64 cores, a modern GPU can have thousands! NVIDIA's H100, for example, contains a staggering 16,896 CUDA cores. Each individual core is far simpler than a CPU core and can't handle complex branching logic on its own, but with thousands of cores working together in parallel, the throughput for suitable workloads can be orders of magnitude greater than what a CPU can achieve.
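As a rough back-of-the-envelope illustration, the sketch below counts how many "rounds" of work it would take to touch every pixel of a Full HD frame if each core handled one pixel per round. This is a deliberately idealised model of our own: it assumes every core does the same amount of work per round and ignores clock speed, memory bandwidth, and the fact that a single CPU core is far more capable than a single GPU core, so the real-world gap will differ.

```python
import math

def parallel_steps(items, cores):
    """Rounds needed if every core handles one item per round (idealised)."""
    return math.ceil(items / cores)

pixels = 1920 * 1080   # one Full HD frame: 2,073,600 pixels
cpu_cores = 64         # upper end of the CPU range mentioned above
gpu_cores = 16_896     # CUDA cores in NVIDIA's H100

cpu_steps = parallel_steps(pixels, cpu_cores)
gpu_steps = parallel_steps(pixels, gpu_cores)
print(cpu_steps, gpu_steps)  # 32400 123
```

Even in this simplified picture, the same job shrinks from tens of thousands of rounds to just over a hundred once thousands of cores share the load.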

The Growing Demand for GPU Compute

The demand for GPUs has been ever-increasing in recent years, driven by successive waves of new use-cases that have each, in turn, strained global supply.

The first major driver of GPU demand, beyond that of gaming and professional graphics work, was the rise of cryptocurrency mining. As cryptocurrencies like Bitcoin and Ethereum gained traction, miners discovered that GPUs were significantly more efficient at performing the repetitive mathematical calculations required for mining than CPUs were. This created a gold-rush effect, with miners purchasing GPUs in enormous quantities.

In Q1 2021 alone, cryptocurrency miners purchased approximately 700,000 GPUs [5]. The effect on the consumer market was dramatic. Graphics cards like NVIDIA's RTX 3080, which had a manufacturer's suggested retail price (MSRP) of $699 USD, were regularly selling for upwards of $2,400 USD on the secondary market [6]. Unfortunately, general consumers and gamers found themselves unable to acquire GPUs at anywhere near reasonable prices, and this was further compounded by COVID-19 related disruptions to manufacturing and global shipping.

This cryptocurrency-driven shortage was just the beginning of a pattern we’re unfortunately becoming all too familiar with. Another example is the increasing price of RAM due to AI demand, with memory manufacturers shifting their production towards AI companies rather than consumer electronics. AI is also the latest and most significant force driving GPU demand, with its ever-growing usage and adoption causing dramatic price increases and limited supply.

The AI landscape today encompasses a wide range of different model types, each with varying demands on GPU compute. At the more accessible end, we have large language models (LLMs) such as those behind ChatGPT, Claude, and Gemini – all of which are primarily focused on text-based tasks such as answering questions, generating content, and assisting with coding. These models, while large and resource-intensive to train, are lighter on GPU resources during inference (the phase where the model is actively being used) than more visually demanding AI models.

Moving up the scale, we have image generation models such as Stable Diffusion, DALL-E, and Midjourney – all of which generate images from text descriptions. These require substantially more GPU compute than text-only models, as they need to process and generate far denser visual data. A capable LLM can run locally on a GPU with as little as 8GB of VRAM – that is, memory dedicated solely to the graphics card. Image generation models, however, require 16GB or more for reasonable performance [7].
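To see how an LLM can fit within 8GB of VRAM, it helps to estimate the memory needed just to hold a model's weights: the number of parameters multiplied by the bytes used per parameter. The sketch below is our own rough illustration. The 7-billion-parameter model size is an assumption chosen for the example, and the estimate ignores everything else that occupies VRAM during inference (such as activations and caches), so treat it as a lower bound.

```python
def weight_memory_gb(parameters, bits_per_parameter):
    """Approximate memory needed just to hold a model's weights, in GB."""
    bytes_total = parameters * bits_per_parameter / 8
    return bytes_total / 1e9

# Hypothetical 7-billion-parameter model at two common precisions.
params = 7_000_000_000
fp16 = weight_memory_gb(params, 16)  # 16-bit weights
int4 = weight_memory_gb(params, 4)   # 4-bit quantised weights
print(fp16, int4)  # 14.0 3.5
```

Under these assumptions, the 16-bit version would not fit on an 8GB card, while a 4-bit quantised version would leave several gigabytes to spare.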

Then there are video generation models, which represent yet another significant leap in GPU requirements. Models that generate video need to not only produce high-quality individual frames, but also maintain temporal coherence across potentially hundreds of frames in sequence. This makes them substantially more demanding – typically requiring 24GB or more of VRAM for full-quality generation [7], and even then, generating just a few seconds of video can take minutes on even the most powerful consumer GPUs.

However, video generation is not the limit of what AI is capable of. Beyond pre-rendered video, a new frontier of AI has emerged – that of "world models". World models are AIs that are able to generate fully interactive and explorable worlds in real-time, rather than simply outputting a static video. One of the earliest and most striking demonstrations of this was Google Research's GameNGen, developed in collaboration with Google DeepMind and Tel Aviv University, which demonstrated an AI capable of simulating the classic game DOOM entirely through a neural network [8]. Trained on hundreds of hours of gameplay footage, GameNGen was able to generate new frames in real-time based on player inputs, achieving 20 frames per second and a visual quality so convincing that human testers had difficulty distinguishing the AI-generated gameplay from the real thing.

Similarly, Microsoft released MineWorld in 2025, an open-source real-time interactive world model trained on Minecraft footage [9]. Using a visual-action autoregressive transformer, MineWorld takes paired game scenes and player actions as input, generating subsequent scenes at 4-7 frames per second – outperforming existing open-source diffusion-based world models.
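The "autoregressive" part of that description refers to a loop in which each generated scene is fed back in as input for the next one, together with the player's latest action. The toy sketch below shows only the shape of that loop. It is not MineWorld's code: `toy_world_model` is a hypothetical stand-in that tracks a player position instead of producing images, and real world models typically condition on a longer history of frames.

```python
def toy_world_model(frame, action):
    """Stand-in for a trained model: predicts the next 'frame'.

    Here a frame is just the player's (x, y) position. A real world model
    would output a full image instead.
    """
    moves = {"left": (-1, 0), "right": (1, 0), "up": (0, 1), "down": (0, -1)}
    dx, dy = moves[action]
    return (frame[0] + dx, frame[1] + dy)

def rollout(model, first_frame, actions):
    """Autoregressive loop: each predicted frame becomes the next input."""
    frames = [first_frame]
    for action in actions:
        frames.append(model(frames[-1], action))
    return frames

frames = rollout(toy_world_model, (0, 0), ["right", "right", "up"])
print(frames)  # [(0, 0), (1, 0), (2, 0), (2, 1)]
```

Because every frame depends on the one before it, the model has to run once per frame, which is why frame rate is such a direct measure of how much GPU compute is available.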

More recently, Google's Genie 3 has pushed this frontier even further, demonstrating the ability to generate interactive 3D environments from nothing more than text prompts, images, or sketches [10]. Genie 3 outputs at 720p resolution at 24 frames per second with real-time interactivity, creating explorable worlds that exhibit emergent physics, object permanence, and realistic spatial relationships. Whilst still in limited research preview as of mid-2025, it represents the direction that AI is heading – and it's a direction that demands extraordinary amounts of GPU compute.
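The frame rates quoted for these world models translate directly into a time budget: the model has at most one second divided by the frame rate to produce each frame. The short sketch below works that out for the figures mentioned above, and also counts how many pixels per second a 720p (1280x720) stream at 24 frames per second involves. It is simple arithmetic of our own, not a measurement of any of these systems.

```python
def frame_budget_ms(frames_per_second):
    """Maximum time available to generate one frame, in milliseconds."""
    return 1000 / frames_per_second

rates = [("MineWorld (low)", 4), ("MineWorld (high)", 7),
         ("GameNGen", 20), ("Genie 3", 24)]
for name, fps in rates:
    print(f"{name}: {frame_budget_ms(fps):.1f} ms per frame")

# Pixels that must be produced each second at 720p and 24 fps.
pixels_per_second = 1280 * 720 * 24
print(pixels_per_second)  # 22118400
```

At 24 frames per second, the whole generation step has to finish in under 42 milliseconds, every frame, for as long as the user keeps exploring.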

What Types of GPU Compute Are Available?

As you can see, with the AI frontier continually pushing the boundaries of what's possible, it's unlikely that the demand for GPU compute is going to subside in the foreseeable future. Data centre GPU lead times have in some cases extended to 36-52 weeks [11]. That's why it's good to know the different ways you can access GPU compute, should you ever need to in the future.

Here at Micron21 we offer a variety of ways for our customers to access GPU compute, including:

Dedicated Servers with Graphics Cards

For those who want the most control and the highest possible performance, GPU Dedicated Servers provide physical servers equipped with enterprise-grade NVIDIA GPUs, directly attached to the hardware. These servers can either be leased on a monthly basis or purchased outright, depending on what works best for your budget and requirements.

Leasing provides the benefit of lower upfront costs and the flexibility to upgrade as newer GPU generations become available, without being tied to hardware that may become outdated. Purchasing outright, on the other hand, offers significant long-term cost savings for those with established and stable workloads – as you avoid the ongoing monthly costs once the initial investment has been recouped. Our dedicated server plans come equipped with Dell PowerEdge servers, dual Intel Xeon Gold CPUs, a minimum of 256GB of DDR4 RAM, and your choice of NVIDIA GPUs including the T4, A10, A16, and L40S – with both single and dual GPU configurations available.
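The lease-versus-purchase trade-off comes down to a break-even point: the month at which cumulative lease payments would have matched the purchase price. The sketch below shows that calculation. The figures are placeholders invented purely for illustration and are not real pricing, and the model ignores ongoing costs such as hosting, power, support, and any resale value.

```python
import math

def breakeven_months(purchase_price, monthly_lease):
    """First month at which total lease payments reach the purchase price."""
    return math.ceil(purchase_price / monthly_lease)

# Placeholder figures only, not real pricing.
purchase_price = 60_000
monthly_lease = 2_500
months = breakeven_months(purchase_price, monthly_lease)
print(months)  # 24
```

If you expect to run the same workload well beyond that point, purchasing starts to look attractive; if your requirements may change sooner, leasing keeps your options open.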

Cloud-based GPU Compute

For those who prefer the flexibility and scalability of virtualised infrastructure, our mCloud GPU platform offers cloud-based GPU compute powered by enterprise-grade NVIDIA GPUs – including the A10, A100, RTX A6000, H100, and H200. A virtualised approach offers several key benefits, including the ability to scale resources up or down as demand changes, the convenience of not needing to manage physical hardware, and also access to the High-Availability (HA) protections built into our mCloud platform.

Within our mCloud GPU offering, we provide both dedicated and contended compute options. With dedicated GPU compute, you get the full, exclusive power of one or more physical GPUs assigned solely to your virtual machine. With contended GPU compute, you're able to acquire a portion of a physical GPU through time-sliced access with guaranteed minimums – starting at just 10% and increasing in increments of 10%. The benefit of this approach is that if other users sharing the same GPU aren't fully utilising their allocation, you're able to burst up to the full processing power of the card, providing excellent value for workloads that aren't time-critical or that have variable demand.
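One way to picture how guaranteed minimums and bursting can coexist is the simplified model below. It is an illustrative sketch only and does not describe the actual scheduler behind any platform: the `allocate` function, the tenant names, and the rule for sharing spare capacity (weighted by each tenant's guaranteed share) are all our own assumptions.

```python
def allocate(guaranteed, demand, capacity=100.0):
    """Share one GPU (in percent) between tenants.

    guaranteed: tenant -> guaranteed minimum share (10, 20, ... percent)
    demand:     tenant -> share the tenant currently wants to use
    """
    # Step 1: everyone receives what they ask for, up to their guarantee.
    alloc = {t: float(min(demand[t], guaranteed[t])) for t in guaranteed}
    spare = capacity - sum(alloc.values())

    # Step 2: hand spare capacity to tenants who still want more,
    # weighted by their guaranteed share, until nothing is left to give.
    while spare > 1e-9:
        hungry = [t for t in guaranteed if demand[t] - alloc[t] > 1e-9]
        if not hungry:
            break
        weight = sum(guaranteed[t] for t in hungry)
        handed_out = 0.0
        for t in hungry:
            extra = min(spare * guaranteed[t] / weight, demand[t] - alloc[t])
            alloc[t] += extra
            handed_out += extra
        spare -= handed_out
    return alloc

guaranteed = {"a": 10, "b": 50, "c": 40}

# Tenants b and c are mostly idle, so tenant a bursts well past its 10%.
quiet = allocate(guaranteed, {"a": 100, "b": 20, "c": 0})
print(quiet)  # {'a': 80.0, 'b': 20.0, 'c': 0.0}

# Everyone is busy, so each tenant falls back to its guaranteed minimum.
busy = allocate(guaranteed, {"a": 100, "b": 100, "c": 100})
print(busy)   # {'a': 10.0, 'b': 50.0, 'c': 40.0}
```

In the quiet case, the tenant paying for a 10% guarantee ends up with 80% of the card, which is where the value of contended compute comes from for workloads with variable demand.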

For those interested in a deeper comparison of these two approaches, we covered this topic in detail in our previous article: Dedicated GPU vs Contended GPU - Get the benefits of GPU compute without the associated costs.

In the past, there was legitimate concern over performance losses with virtualised approaches when compared with dedicated servers with directly connected GPUs. However, with GPU passthrough technology, virtual machines are now able to access the full capabilities of a physical GPU as if it were directly attached to the system, bypassing the hypervisor's emulation layer and providing near-native performance. This means that the performance gap between dedicated physical hardware and cloud-based GPU compute has been effectively closed for the vast majority of workloads.

Have Any Questions About Our GPU Compute Options?

If you have any questions about our GPU compute options, or would like to have a discussion about which approach would work best for you, let us know! We'll be able to walk you through our different platforms, detail the benefits of each, and help you choose the best solution for your specific requirements and budget.

You can call us on 1300 769 972 (Option #1) or reach us via email at sales@micron21.com.